Introduction

Are you ready to go down the rabbit hole? To visit a surreal world, where black is white and white is carrots?

A friend, Metacognician in Shanghai, describes the situation as follows: “Life is more absurd than movies. I've gone down the rabbit hole too, when it just becomes more and more strange and you wonder how that all is supposed to make sense.” I asked him if I should just embrace it. He answered, “Why should you ... change the universe?”

It started with a psychotic named Jim Kiraly who resides, we think, at 6329 Twinberry Circle, Avila Beach, California.

Jim Kiraly is a respected citizen. A churchgoer. A Vice President of Transamerica Corporation. And a violent abuser who tried to use an emergency anti-violence measure, one intended to protect battered women, to stop his victim in a wheelchair from writing a book.

Concise enough?

:)

For attorneys: Jim Kiraly filed for CLETS against his son and victim, who lived 200 miles away, did not own a car, and was in a wheelchair. His son and victim was not asked to end communications. Jim had no (zero) specific and relevant allegations that were not perjury. But he turned down repeated offers of no-contact and a signed stipulation that gave him everything but CLETS. He insisted on CLETS if his victim ever once “discussed” him with third parties.

In the end, Jim Kiraly signed an agreement far weaker than the ones he'd been offered.

A review of Court paperwork and other materials will tend to

confirm that Jim and other parties, including attorneys on all

sides, committed multiple felonies, crimes, and faux pas.

:P

The word “abuser” is stated here publicly and without equivocation. A formal offer is hereby made to reaffirm the word in writing and under oath. Attorneys will understand the significance of the point. In short, there is little terror of a threatened defamation suit on this side. Actually, we feel that such a suit will fit nicely up Jim Kiraly's abuser ass.

Jim has one son, Ken Kiraly, who invented the Amazon Kindle and is one of the leads at Amazon's secret Lab126. Another son, Tom Kiraly is one of the leads, a Vice President-CFO type, at medical insurance firms, including one of the largest, Humana Corporation.

These people and some of the biggest names in Silicon Valley legal circles have committed or are involved in multiple crimes.

For the next decade or two, we're going to explore the crimes that these people committed, the motivations and the denial involved, the background and histories that led each person to make the choices that they did, and ways to build upon what happened and move towards positive societal goals.

There's plenty to go over. These people committed or were involved in: Spousal abuse, child abuse, DDOS (a highly prosecutable violation of CFAA), extortion, perjury, conspiracy to commit perjury (a possible felony), false police reports, conspiracy to file false police reports (a possible felony), unlawful threats, barratry, defamation, malpractice, civil harassment, criminal harassment, abuse of process, and violations of SCCBA Professional Standards.

The point was to force Jim's oldest son and victim, me, to sign a gag order. I was in a wheelchair. I'd never made a single inappropriate threat against my abuser. I wasn't even asked to not to call anybody. But Jim threatened to put me in a violence database unless I agreed never to write about him.

I won the right to write, but I lost my home of 25 years, most of my possessions, my chances for retirement, everything. Everything but a realization.

I can make a difference. I can conduct research for legitimate and reasonable purposes, document what happened, and analyze the choices of the people involved:

- Jim Kiraly, abuser. Possibly Treasurer at St. Johns Lutheran Church. Vice President of Transamerica Corporation. Also connected to New Life Pismo Church. Involved with Service Core for Retired Executives (SCORE).

- Grace Kiraly, abuse victim and Christ Follower.

- Tom Kiraly, abuse victim, VP or CFO of firms such as Hanger Inc., Humana Corporation, and Sheridan Healthcare.

- Gail Cheda, slightly demented Realtor, spittle flying.

- Ken Kiraly, abuse victim, inventor of the Amazon Kindle, lead at Amazon's secret Lab126, sociopath.

- Tom Stutzman of Thomas Chase Stutzman, a Family Law attorney whose hobbies include martial arts and alleged sexual harassment

- John Perrott of Thomas Chase Stutzman, a personable albeit lazy Family Law attorney who has a slight tendency towards fraud and malpractice

- Chris Burdick, head of the Santa Clara County Bar Association (SCCBA). Chris, you broke a written promise to speak with me because, you said, we had “Prior...” You didn't finish the sentence. Were you worried that I might take false statements to the State Bar? What's the deal with you and Hoge Fenton, anyway? What will we find if we dig?

- Michael Bonetto of Hoge Fenton. Michael, seriously, what are you?

- Alison Buchanan of Hoge Fenton, ethics specialist. Alison, did you contribute to the SCCBA Professional Standards, or was that before your time?

- Tracie Zerr of Thomas Chase Stutzman, a woman of boundless intelligence and sensitivity.

- Maggie Desmond of Hoge Fenton. Maggie, information, please. What is your role in Hoge Fenton's campaign to hush victims of abuse? When the clients that you've protected beat up women, how do you compartmentalize?

Maggie told me that she didn't know what she could say to me about what happened. However, we have decades to work it out. It will be productive. I'd like to direct the attention of attorneys and other parties to the:

Legitimate and Reasonable Purposes List

Questions or comments are welcome. For technical notes and disclaimers, click here.


130928 Saturday — Linux and Windows Together Again

Tags:

linux machine tech virtual vm windows

A full Kiraly Cases tags system will be added in 2014.


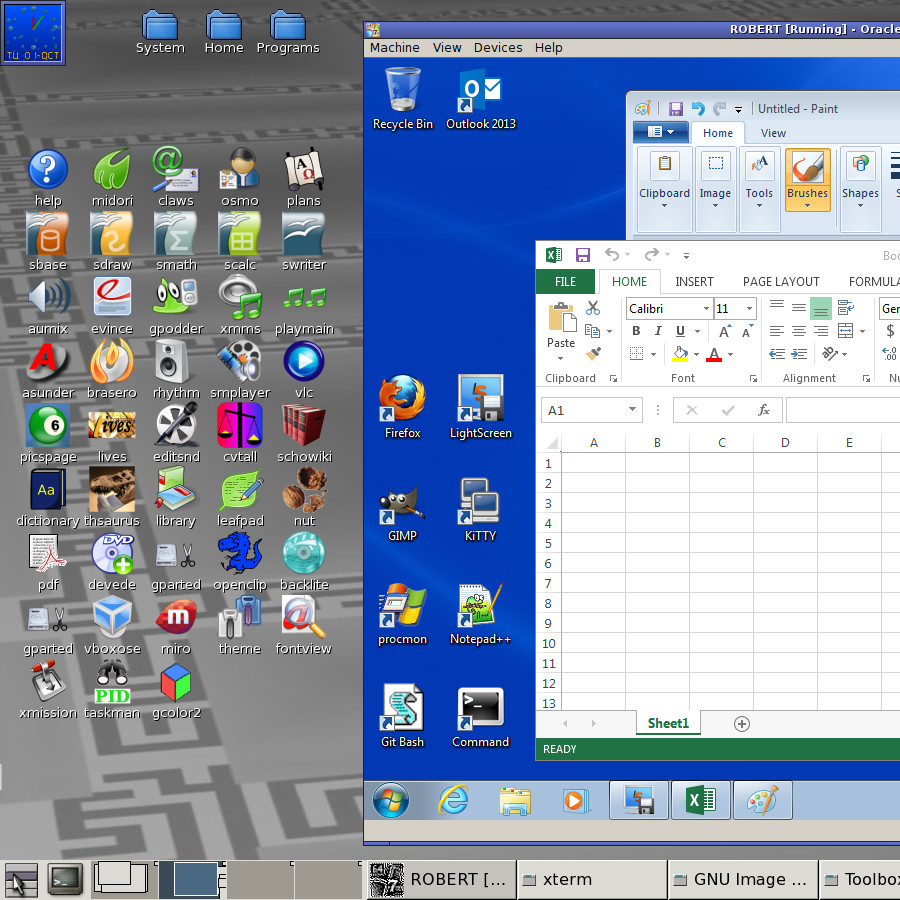

130928. This is a technical post intended for Linux and/or MS-Windows engineers.

1. Overview:

In September 2013, OldCoder converted a Windows 7 Professional, 64-bit release, laptop into a virtual machine (or VM) based on Oracle VirtualBox. This post explains how the conversion was done and discusses related points.

The conversion took place in a corporate environment that included a Windows Domain (a particular type of LAN) and an I.T. department. If you attempt something similar at home or in a situation that does not include a Windows Domain, you may be able to disregard related points below.

Terminology:

2. Virtual Machine positives and negatives:

This section discusses positive and negative points related to a conversion of this type.

Virtual Machine negative points:

2.1. This approach requires increased system resources; in particular, extra disk space and RAM. Additionally, the CPU horsepower available to the Linux and Windows machines individually is less than the CPU horsepower that would be available to a single unified machine.

2.2. It is crucial that Virtual Machines be backed up on a regular basis. Glitches on the VM host side can corrupt VM guests beyond recovery.

Virtual Machine positive points:

2.3. The VM approach provides the equivalent of disk image backups. Such backups may greatly speed up recovery from Windows malware attacks.

2.4. One difference between VMs and disk image backups is that you can run VMs without “restores” and/or the need to overwrite the current contents of a hard disk.

2.5. Under the VM approach, you can run multiple different laptops on a single physical machine. Extra laptops might include, for example, copies of old laptops with legacy development environments or, alternatively, past versions of a current laptop.

2.6. If a Linux system is used as a VM host, the VM approach gives you more or less the equivalent of two separate machines, one running Linux and the other running Windows, that can share a laptop's display, keyboard, and DVD drive. There's no need to reboot to switch between OSes. They're both present concurrently.

2.7. The two machines can share a clipboard. In other words, you can copy and paste text between them.

2.8. The two machines can both use a Windows Domain and/or the Internet. They have separate IP addresses at the Windows Domain level.

2.9. You can browse the Web safely in Linux while working in Windows. It all appears on the same display unless you elect to run Windows in fullscreen mode.

2.10. With some extra steps, discussed in the “Guest Additions” section below, the two machines should be able to share one or more disk folders. As a related note, shared folders should make it possible for you to download files safely from the Web to Windows. The basic idea is that a Linux browser can be used to download files from the Web to a shared folder. Windows sessions can pick up the files from there.

2.11. You can shift CPU cores back and forth between the two machines.

2.12. If a VM host has a USB3 port and a USB3 external drive, and if a VM guest is stored on the USB3 external drive in VDI format (as opposed to VHD or other formats), it may be possible to run the VM guest directly from the USB3 external drive. This is a useful feature. Warning: If attempts to do this fail, the result may be a corrupted VM guest. Backups are recommended before such attempts are made.

3. Main conversion procedure:

This section outlines the key steps that were involved in the conversion. It is not a detailed guide.

Starting points:

3.1. The laptop was a relatively new Dell Inspiron with a 500GB internal hard disk, four CPU cores (64-bit), and about 6GB of RAM. Note: These are probably minimum requirements for the type of conversion discussed here.

3.2. The laptop had two USB3 ports. Note: For others who wish to try this conversion, laptops with one or more USB3 ports are strongly recommended. This will facilitate backups. USB2 ports will also work, though backups will be slower in this case.

3.3. OldCoder's I.T. department installed a fresh copy of Windows 7 Professional, 64-bit release, on the laptop and added a number of standard packages, including (for example) Microsoft SQL Server and Outlook 2013.

3.4. The virtual machine (or VM) software used was Oracle VirtualBox 4.2.18 (Open Source Edition).

3.5. The host OS (i.e., the OS which ran the VM software used) was a Linux distro of OldCoder's own design. He didn't install the distro initially; he booted it from a USB device. A number of standard Linux distros would have worked as well.

Outline of procedure:

3.6. Turn off Windows Update. To do so, use:

Start -> Control Panel -> System and Security -> Windows Update -> Change settings -> Never check for updates

As a related note, Windows Update is bad.

3.7. Download a freeware MS-Windows utility known as disk2vhd and run the utility under MS-Windows. Use it to create a VHD file stored on the same disk as MS-Windows. The size of the VHD file may be in the general range of 40GB to 85GB.

3.8. The Linux distro used may be a 32-bit release. However, if so, the kernel used must have been built with PAE support. Note: This is true of many recent Linux kernels but not all of them.

3.9. Boot Linux.

3.10. Load both the standard VirtualBox kernel module vboxdrv and the additional VirtualBox kernel module vboxnetflt. Additionally, set up Linux so that both modules will be loaded automatically on future Linux boots. Details are beyond the scope of this document.

3.11. Run VirtualBox. Create a new VM. When you do so, set Type to Microsoft Windows and Version to Windows 7 (64-bit). If you are not using Windows 7 (64-bit), substitute the appropriate setting.

3.12. After the VM is created, use Settings to adjust its parameters as follows:

* Set Display -> Video -> Memory to 128 MB
* On Display -> Video turn both 2D and 3D acceleration off
* On General -> Advanced set Shared Clipboard to Bidirectional
* On Network -> Adapter 1 set the network interface to the one that Linux is using
* On the same screen, set “Attached to” to Bridged Adapter
* On System -> Motherboard set RAM to at least 3584 MB and preferably more (see below)
* On the same screen, make sure that Enable IO APIC is turned on
* On System -> Processor set number of processors to about half the number of processors that the VM host has (but at least one)

If you aren't able to set RAM to at least 3584 MB, the conversion may not be possible.

3.13. Attach the VHD file created previously to the VM and boot the VM.

3.14. Network setup in the guest OS, i.e., Windows 7, is largely beyond the scope of this document. As a rule, in a corporate environment, the assistance of the I.T. department will be needed if Windows Domain and/or Internet access is to work correctly. However, some network-related steps are discussed in the sections that follow.

3.15. If the VM copy of Windows contains a folder named C:\Windows.Old at this point, the folder may be deleted.

3.16. Set Display Resolution in the VM copy of Windows 7. Try using the highest resolution whose dimensions do not exceed the dimensions of the resolution used by the VM host display. To set Display Resolution, use:

Start -> Control Panel -> Hardware and Sound -> Display -> Adjust resolution

3.17. The conversion of Windows 7 into a VM may trigger Microsoft anti-piracy software; specifically, Windows Genuine Advantage or its descendants. Watch for messages about countdowns and/or “activation”. If such messages appear, this has probably happened. The problem is especially likely to occur if a version of Windows 7 created by a laptop manufacturer is used. Such a version of Windows 7 will often decide that the VM in which it finds itself is not a laptop of the proper model. If this issue arises, and you are in a corporate environment, the I.T. department may be able to provide registration keys that will satisfy Windows Genuine Advantage. Other solutions are possible, but they are beyond the scope of this document.

3.18. If Windows 7 is up and running in the VM guest, and seems to be in an acceptable state, shut down Windows 7. Then convert the VHD file which you now possess into a VirtualBox VDI file. To do so, use a CLI command similar to the following:

VBoxManage clonehd input.vhd output.vdi --format vdi

Use md5sum or preferably sha1sum to generate a checksum file for the VHD file. Move the VHD file and its checksum file to offline storage. Remove the VHD file from the VM configuration. Attach the VDI file in its place.

When you do this, VirtualBox may complain that it can't add the new disk due to UUID conflicts. If this happens, the old disk has not been removed completely from the configuration. The steps needed to correct this are beyond the scope of this document.

The reason for converting VHD to VDI (which is a VirtualBox-specific format) is that VDI may be faster and more robust than VHD.

3.19. After the VDI file is added successfully to the VM guest as a disk drive, proceed to use the VM guest as a Windows 7 machine.

3.20. Periodically, perhaps somewhere between daily and weekly, shut down Windows 7, close VirtualBox, and make back-up copies of the VDI file. As a rough rule of thumb, if the VM host has a USB3 port and a USB3 external drive, assume that backups may proceed at a rate of about two gigabytes per minute. For USB2 ports or drives, the speed may be much lower.

4. Setting up shared folders:

4.1. You may wish to add VirtualBox Guest Additions to each Windows 7 VM guest that you create. If you do so, VM guests will be able to share one or more folders with the associated VM host.

4.2. There are two ways to start:

4.2a. In the menu bar for a running VM guest session, Devices -> Install Guest Additions may work. This approach requires a fast and reliable Internet connection. It tries to download a CD ISO from the Internet, mounts the CD ISO on the VM guest, and runs driver installation software.

4.2b. Alternatively, you may try going to this site on the Internet:

http://download.virtualbox.org/virtualbox/

If the site is there, go into the subdirectory corresponding to the release of VirtualBox that you are using and locate a CD ISO file with a name similar to:

VBoxGuestAdditions_numbers.iso

Download the CD ISO, mount the CD ISO on your VM guest, and run the setup program that you will find on the mounted CD ISO.

4.3. After the Guest Additions are installed in a VM guest, restart the VM guest in question.

4.4. Create a folder with the pathname /vmguest in the VM host. Set the permissions for the folder to octal 777. Then use VirtualBox -> Settings -> Shared folders -> plus-symbol to add the following entry under Machine Folders:

Folder path: /vmguest

4.5. In the VM guest, proceed as follows to map a drive letter to the host folder in question. Note that drive letters may be set up as either temporary or permanent:

Start -> Computer -> Network -> VBOXSVR -> Right click on share -> Map network drive

4.6. If at a later date you decide to use the VM guest under VM software other than VirtualBox or the release of VirtualBox that you started with, uninstall the VirtualBox Guest Additions:

Start -> Control Panel -> Programs -> Uninstall a program -> Oracle VirtualBox Guest Additions

5. Tips related to VM operation:

5.1. It is recommended that you usually shut down a Windows 7 VM guest when you will not be using it for an extended period, as opposed to leaving it running or doing the equivalent of a VirtualBox “suspend” operation; i.e., “saving the machine state”. Shutting down the VM guest may reduce the chances of corruption.

5.2. Oracle VirtualBox provides a meta-key which is referred to as the Host Key. To determine the key that is used as the Host Key and/or to change the key in question, use:

VirtualBox -> File -> Preferences -> Input -> Host Key

5.3. To send Control Alt Del to a Windows 7 VM guest, press the VM Host Key and the Delete key simultaneously while the VM guest has keyboard focus.

5.4. Some VM guest settings can be changed while a VM guest is running. Some can only be changed while the VM guest is shut down. If the VM guest is not shut down, the latter settings will be greyed out.

5.5. If you have more than two CPU cores in a VM host, you should normally assign at least two of the CPU cores to a VM guest before you attempt significant installs or compiles in the VM guest. You may assign more, though at least one CPU core must be reserved for the VM host. Note: The VM guest will need to be shut down before the number of CPU cores assigned to it can be changed.

5.6. If a Windows 7 VM guest has keyboard focus, and things are working correctly, the “Windows” meta-key on a laptop keyboard should be passed through to the VM guest and work normally for Windows 7 purposes.

5.7. If you make a back-up copy of a Windows 7 VM guest VHD or VDI file, you may wish to make a back-up copy of the directory tree $HOME/.VirtualBox at the same time and store the two backups together.

5.8. If you switch from one network adapter to another in a VM host, note that you must make a corresponding change at the VM guest level.

6. Remote Desktop in a Windows Domain:

If you're in a Windows Domain and an I.T. person or somebody else needs to “Remote Desktop” into the VM guest discussed previously (for example, to install software), try the following procedure. This procedure is not guaranteed to be complete or correct. Changes will probably be needed.

This procedure assumes that you have I.T.-level authority for the Windows Domain or that an I.T. person with such authority will be assisting you. If this condition is not met, stop here.

6.1. Turn off the VM host firewall temporarily. You may be able to add firewall exceptions instead, but such exceptions are beyond the scope of this post.

6.2. Turn off Windows Firewall temporarily in the VM guest. To do so, use:

Start -> Control Panel -> System and Security -> Windows Firewall

6.3. Make sure that Windows Computer Name and Domain Membership are set up correctly. If you are in a corporate environment, the I.T. department will probably need to be involved in this step. It might go something like this:

Start -> Control Panel -> System and Security -> System -> Computer Name -> Change -> Computer name: BaconComputer -> Member of: Domain -> BaconDomain

The Bacon names above and below will usually be specified by the LAN administrator.

6.4. Relax the Remote Settings parameters:

Start -> Computer -> Right Click -> Properties -> Remote Settings -> Allow Connections from Any

6.5. Add the appropriate person to the appropriate group. Substitute the domain name for BaconDomain and the other person's domain user name for EggsName.

Start -> Computer -> Right Click -> Manage -> Local Users and Groups -> Groups -> Remote Desktop Users -> Add BaconDomain\Domain Admins -> Add BaconDomain\EggsName

6.6. Log out of Windows 7. Have the other person “Remote Desktop” in and do whatever work is necessary. While this is happening, you should remain logged out or you may disconnect him or her.

6.7. Turn Windows Firewall back on in the VM guest.

6.8. Turn the VM host firewall back on.
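The checksum and backup advice in steps 3.18 and 3.20 can be sketched as a small shell function. This is only a sketch under stated assumptions, not the procedure OldCoder actually used: the function name backup_vdi, the date-stamped file naming, and the .sha1 suffix are all hypothetical, and sha1sum is assumed to be available on the Linux VM host. The VM guest must be shut down and VirtualBox closed before the copy is made.

```shell
#!/bin/sh
# backup_vdi SRC DEST_DIR  (hypothetical helper, not part of VirtualBox)
# Copies a VM disk image into DEST_DIR under a date-stamped name,
# verifies the copy with SHA-1, and writes a checksum file next to it.
backup_vdi() {
    src=$1        # VDI or VHD file; the VM guest must be shut down
    dest_dir=$2   # e.g. a directory on a mounted USB3 external drive

    [ -f "$src" ] || { echo "no such file: $src" >&2; return 1; }
    mkdir -p "$dest_dir" || return 1

    name=$(basename "$src")
    stamp=$(date +%y%m%d)            # same YYMMDD style as the post dates
    copy="$dest_dir/$stamp-$name"

    cp -- "$src" "$copy" || return 1

    # Compare the checksum of the original with the checksum of the copy.
    src_sum=$(sha1sum < "$src" | cut -d' ' -f1)
    copy_sum=$(sha1sum < "$copy" | cut -d' ' -f1)
    if [ "$src_sum" != "$copy_sum" ]; then
        echo "checksum mismatch for $copy" >&2
        return 1
    fi

    # Store the checksum in the format that "sha1sum -c" expects.
    printf '%s  %s\n' "$copy_sum" "$stamp-$name" > "$copy.sha1"
    echo "$copy"
}
```

A call might look like backup_vdi "$HOME/VirtualBox VMs/win7/win7.vdi" /mnt/usb3/vm-backups, where both paths are placeholders. Running sha1sum -c on the .sha1 file from inside the backup directory later confirms that the stored copy is still intact. Per tip 5.7, the $HOME/.VirtualBox tree could be copied to the same place at the same time.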

130928 Saturday — I See IRC: Mother Earth

Tags:

irc


130928. A chat with Skittles. John Perrott and Tracie Zerr, Family Law, San Jose, this kid is the one from this Spring again. Do you understand what the games might have cost the world?

<Skittles> I try to get rid of any thoughts of my mother

<Skittles> Care to tell me about the relationship between you and your parents?

<OldCoder> Skittles, read the blog

<Skittles> too long, i prefer conversation

<OldCoder> It happened the night of March 31, 2008

<Skittles> Well, not literally, I hope

<Skittles> stay for a while

<OldCoder> It is not my decision. It is their “choice”.

<Skittles> I walked to the highest peak, of the tallest mountain, to meet the past, but when I arrived, it was already gone.

<Skittles> It had left long before I began my journey, you see.

<OldCoder> Your perceptions seem sharp

<OldCoder> You are a kid finding his way

<Skittles> I've found the path, and saw my shadows a couple days ago. Now I know where the light is.

<Skittles> If the light is in front, your shadows are behind

<Skittles> I won't be able to help others, until I reach the end of my path successfully.

<OldCoder> Will you?

<Skittles> I hope there is an after life

<OldCoder> You will not. Nor will the Christ Followers.

130928 Saturday — Apotheon says Shells are Hells

Tags:

shell tech


130928. Shells are a standard type of software that developers use. Apotheon, a developer with considerable experience, offered some thoughts about shells a few days ago. I suggested that he write an essay on the subject, he wrote the essay, and here it is.

The essay includes an acknowledgment to me and I'm pleased to see it. But the text does not reflect my own views on Bash. I feel that Bash is a Smash. Regardless, this is an interesting article.

The author's own site is presently located at http://blogstrapping.com/ and the essay is distributed under the Open Works License. For more information about the license, visit: http://owl.apotheon.org/

## Bash Considered Pointless (Or: Pointless Bashing)

by Apotheon; September 2013

The purpose of this essay is to make some points about interactive command shells and scripting:

1. Interactive command shells should be optimized for use as interactive command shells.
2. Programming languages should be optimized for programming.
3. These targets often conflict. Pick one target when designing a tool. That target should be ascendant.
4. Given that tools should be optimized for their core purposes, use the best tool for the job.
5. Bash is pretty much never the best tool for the job.

I will address these issues by commenting on particular use cases and criteria used to select shell and scripting tools.

### Interactivity

There are two major types of interface people tend to use in the Unix world: graphical user interface (or GUI) and command line interface (or CLI). One of the many reasons Unix-like operating systems can be great is the fact that both of these interface styles are not simply available; they are flexible and powerful, and they can be combined in beneficial ways.

My personal favorite approach to user environment design is to use a tiling and tabbing window manager within which I'm probably using a small number of GUI applications and a large number of terminal emulators at any given time. Your mileage may vary, and the customizability of the user environment in Unix-like systems ensures that you can choose a different arrangement of these UI styles to get maximum mileage out of the system.

I assume readers of this essay will agree that UI customizability is a good thing, at least up to the point where your user environment starts making excessive trade-offs of other benefits for the customization you get. Given that customizability of user interfaces is a good thing, consider the options for shell customization. The most obvious criteria that come to mind regarding how a shell might be selected look like this:

* customizability

The most popularly used shells in the Unix world, as far as I'm aware, are:

* Bash

fish is not one of the most popularly used shells in the Unix world (or anywhere), but it is included here for reasons that may become clear later on.

Note: I'm intentionally avoiding shells that are not available for installation more or less “everywhere”, using my own admittedly biased definition of “everywhere”.

“sh” in this case refers to any of a number of different shells roughly complying with the Open Group's “Single UNIX Specification” (SUS) description of a shell that must be available on any OS that can be certified as complying with that standard and is thus eligible to use the UNIX trademark (note the all-capital letters). Such shells reside at /bin/sh in the system's filesystem. While a given Unix-like operating system's developers and distributors may or may not particularly care about SUS certification, this particular part of the standard is pretty important to ensure portability of common shell scripts (I'll come back to that later), so pretty much every system with any pretensions of being Unix-like comes with an implementation of sh. I'll mostly just refer to that class of shells as though it is a single shell here, for brevity's sake.

Now that we've established some criteria for selecting the shell you wish to use interactively, a lineup of shells from which to choose, and the idea that customization for user interfaces is generally a good thing, we can get down to the nitty gritty details of what shells to use. Keep in mind that many of the evaluations of different shells below are based on my own anecdotal experience, so take that for what it's worth, try shells out to get your own experience with them as much as you feel the need, and form your own conclusions. If you have some relevant information that can help me refine my understanding of some of these issues, click here to let me (Apotheon) know.
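Because “sh” names a class of shells rather than one program, it can help to check what actually sits at /bin/sh on a given system. The following is a minimal sketch, not part of the original essay: the function name describe_sh is hypothetical, and the readlink utility is assumed to be present (it is on common Linux and BSD systems, but older POSIX editions did not require it).

```shell
# describe_sh [PATH]  (hypothetical helper)
# Reports whether PATH (default /bin/sh) is a symlink to another
# program or a regular executable in its own right.
describe_sh() {
    target=${1:-/bin/sh}
    if [ -L "$target" ]; then
        # A symlink: print the name it points to.
        printf '%s is a symlink to %s\n' "$target" "$(readlink "$target")"
    elif [ -x "$target" ]; then
        printf '%s is a regular executable\n' "$target"
    else
        printf '%s not found\n' "$target"
        return 1
    fi
}
```

Calling describe_sh with no arguments inspects /bin/sh. The output differs from system to system, which is the point: the same pathname can lead to quite different implementations.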
#### Customizability

There are varying levels of customizability within each shell, generally accomplished by editing a configuration file such as ~/.shrc, ~/.cshrc, or something similar. In fact, depending on the specific configurations you want, you may have two or more different configuration files. The least configurable option is probably sh, shortly followed by csh. Moving from most customizable to least customizable, the overall ranking looks something like this:

1. fish The customizability of anything other than the C shell and sh is enough to suit most people's needs, as simply choosing one of these shells is itself probably the most significant shell customization choice to make at this point. The first two options in this list, fish and the Z shell, are so much more customizable than any of the alternatives that they stand alone in a class by themselves. Those two offer so much customization that people who prefer one of the other six shells might consider it too much. I consider it “too much” myself because getting that much customization out of it comes at the cost of making the shell implementation bigger, and thus buggier, less stable, and slower. #### Dependencies Having many, or large, dependencies can be problematic for a shell. It tends to result in poor stability, for instance, as changes to any of the dependencies (or breakage of them) can break the shell that depends on them. One of the reasons that some Unix-like systems default to sh as the standard user shell for the root account is the fact that, if some mounted filesystem that contains some dependencies does not mount properly or otherwise fails, /bin/sh is probably still on the root filesystem and working fine. I'm not aware of any significant dependencies for any shells in that list other than Bash, fish, and the Z shell. All three of these depend on iconv, which is a problematic dependency at times. The Z shell nominally depends on ncurses, though I think it can build without it and still be an amazingly useful shell, but ncurses is not the most horrible dependency in the world anyway and tends to be stable. Both Bash and fish also depend on gettext, which is bloated, buggy, prone to instability problems on upgrades for large numbers of other programs, and generally something I would like to avoid. 
You may not consider these dependencies problematic for an unprivileged user account, as long as you do not use any of these shells for the root account, though you should know that in many Linux distributions the implementation of sh located in /bin/sh is really just a symlink to the Bash executable file, most likely executed in a sh-compatibility mode. #### Features There are three big reasons to consider the availability of features in a shell. One is that you may consider some features non-negotiable for your interactive command shell of choice. The C shell and tcsh, for instance, lack an easy way to use Unix pipeline redirects separately for the STDERR and STDOUT streams. It can be done, but deepending on how you wish to redirect them, separating STDERR from STDOUT can get pretty ugly. Other bits of functionality whose absence you may regard as deal-breakers can also come into play when choosing a shell, of course, and apart from the csh family, the usual loser here is sh. Another is that you might really want some set of features that are nice to have, but not necessarily non-negotiable. For these purposes, some shells may have some of the features you want, and others may have different features you want, so you may have to make a sacrifice of some for the sake of others. The most likely shell to give you all the options you could want is probably the Z shell. There are some features that only fish offers, though most people who know enough about shells do not care about the features only fish provides (such as a web interface for color configuration — yes, really). #### Licensing Of the listed shells, there are basically four options for general licensing. Bash is strongly copyleft-licensed, under the terms of the GPL (version 3, as of this writing); fish is also GPLed, though I'm not sure what version of the GPL it uses. The public domain Korn shell implementation, pdksh, is nominally dedicated to the [public domain] (http://copyfree.org/public). 
Some jurisdictions (France is one example) do not recognize the ability of a copyright holder to dedicate something to the public domain before its normal legal term of copyright expires, and there are some questions about the legal effectiveness of dedicating something to the public domain even in places that are generally regarded as allowing public domain dedication, so there may be some issues with the “public domain” status of pdksh, I suppose. Worse, pdksh reportedly includes some GPLed components, even if the main pdksh project is nominally dedicated to the public domain. Step warily, I suppose. The standard shell, sh, is actually a bunch of different implementations of roughly SUS-compliant shell, which may vary widely in licensing. Check with your favored operating system's sh implementation for license terms. Everything else is distributed under some flavor of copyfree license. This may be important to you, depending on any desire to redistribute, your licensing preferences, your use case, and your working conditions. I prefer copyfree licensing, and my favorite shell options at this time fall within the copyfree licensed options, so I'm pretty happy in this regard. #### Resources CPU, RAM, and storage efficiency are the three main resource usage concerns. In at least some implementations, sh should be the lightest weight in two or three of those categories (though probably not where sh is just a symlink to Bash, of course). I would guess that fish lies at the opposite end of the spectrum, and is probably a worse efficiency offender than all the rest of the options. 
I have, unfortunately, not done any extensive benchmarking (or any at all, really), but from what I have heard mksh is (surprisingly) smaller on disk and in RAM than tcsh, which is itself a pretty lightweight shell, I think (though the C shell is even lighter than tcsh, and I imagine should be lighter than mksh), and the Z shell seems to be a significantly more gluttonous consumer of these resources than mksh and tcsh.

If I had to guess, just based on the stuff each shell does, I would say that Z shell configured to do a lot of nifty dynamic stuff, making effective use of its many features and high level of customizability, can probably use more CPU resources than all the rest of them (except perhaps fish, especially when the browser is used for some configuration tasks). Given its dependencies and my experience with it and with GNU software in general, I would be surprised if Bash was not the most egregious RAM and storage consumer (except possibly fish, again, though I think Bash still might beat it). Outside of (perhaps pathological) highly dynamic configurations for the Z shell, though, it is entirely possible that Bash is the worst offender for efficiency in all three of these categories.

#### Responsiveness

How “snappy” an interface feels to the user (how quickly it seems to react to input, and provide expected output) is an important criterion in software selection for most users. In the case of shells, any discernible hesitation can be a fatal flaw in some users' estimations.

I have not actually had the dubious pleasure of developing any meaningful experience with fish in this regard; it does not offer anything of sufficient interest for my preferences to justify giving it any really meaningful evaluation in actual use. There are two other shells in the list examined here that differ enough from the rest of them in this regard to justify special mention, however.
In my rather extensive use of the Z shell over a period of around two years, I found that it hesitated a little bit on a semi-regular basis. I found this somewhat distracting sometimes, and rather annoying. It was not a lot, but it did hesitate often enough for it to be regarded as hesitation “on a regular basis”. When I deleted that operating system off my laptop and replaced it with a fresh FreeBSD install, I stopped using the Z shell, in large part because of that one factor (and in some small part because I was comfortable with tcsh for most purposes, having chosen FreeBSD as my preferred OS for several years by that point, and in some slightly less small part because I wanted to give mksh a try, which I had not yet really used with any seriousness at that time). I also have rather extensive experience with Bash as an interactive command shell, both as my first user shell on Unix-like systems quite a few years back, and as the initial shell I used as my primary user shell on the system where I ended up using the Z shell instead after a little while, among other brushes with it. What my experience of Bash has taught me about responsiveness is that it is dog-slow. Hesitation does not just occur “on a regular basis”: it is the norm with Bash. In addition to being very frequent, it is also often pretty significant when it happens, much worse than I have experienced with the Z shell, even on the same laptop with less software installed (and this is not some circa 1999 system, either: it is a Core i5 system with four gigabytes of RAM). Apart from web browsers and PDF readers, it is easily the slowest piece of user-facing software I have experienced on this laptop. All the rest of the shells I have used are much quicker — even MS Windows shells like cmd and PowerShell, or various programming language REPLs. I, for one, find Bash performance pretty intolerable, though I suppose I may simply have a very low tolerance level. 
I'm sure that many Bash aficionados never even notice the kinds of hesitations that drive me batty; they would, likewise, surely not notice the hesitations I have experienced in Z shell, and would therefore find the “responsiveness” criterion for shell selection irrelevant in all ways.

#### Syntax

There are two major syntactic styles in the shell world. One is the csh style, and the other is the sh style. People can argue endlessly about which is better. I happen to have a (marginal) preference for the csh style, though that preference is not strong enough to overcome other considerations that prompt me to choose a shell from the sh family.

The options for csh syntax include the C shell, the basic option for that style (and the shell referred to by “csh”), and tcsh, its slightly more advanced and feature-filled descendant. The options for sh syntax include everything else in that list.

I glossed over something a little bit. There is one shell that fits neatly in neither category: fish, also known as the “friendly interactive shell”. Its syntax is largely sh-style, but it has some not-strictly-compatible differences.

#### Ubiquity

Some people make an argument to the effect that, if some tool is “everywhere”, they should get very familiar with that tool so they will never lack for the ability to make the most of the tool. For instance, many vi users (usually actually Vim users, but close enough for these purposes, mostly) refer to the ubiquity of vi on Unix-like systems as one strong reason to prefer it over something like GNU Emacs.

Some people make a similar argument for their favorite shell choices. The C shell is on every default install of a FreeBSD system. A Korn shell implementation is on every OpenBSD system by default. Bash is on the vast majority of Linux distributions by default, and on Apple MacOS X; it even comes with some Unix emulation environments for MS Windows. So far, it sounds like the winner of the ubiquity argument is Bash -- right?
Bash users are also the most vocal users of the ubiquity argument that I have observed.

Of course, the one shell option more ubiquitous than Bash is sh. It's on various BSD Unix systems and every Linux distribution I have encountered (even if only in the form of a slightly behaviorally modified symlink to Bash). If you have a Unix-like system, the chances against it being absent are *overwhelming* for any random one of you reading this.

Apart from that, the ubiquity argument is pretty much null and void for plain ol' unprivileged user interactive command shell selection. See above, regarding the value of customizability for user interfaces.

#### Conclusions

* If you want something with lots of features and heavy customizability, use the Z shell (or maybe fish).
* If you'd prefer something that avoids dependency hell, avoid Bash and possibly fish.
* If something whose licensing terms will not impose restrictions is desirable, avoid Bash and fish (and maybe pdksh).
* If you want something efficient, lightweight, and snappy, avoid Bash and the Z shell (and fish).
* If you want something with csh syntax, use the C shell or tcsh.
* If you want something found *everywhere*, use sh.

You may notice three things here that are interesting:

1. You should almost certainly avoid fish, period.
2. I tend to mention fish in parentheses, as an afterthought. See the note about this shell below.
3. About the only reason to use Bash that remains is either ideological devotion to the GNU/GPL/FSF orthodoxy or simply not wanting to make any decisions about what shell to use when using one of the vast majority of Linux distributions.

There is one other argument that might be raised: scripting. I will address that below.

In short, if you care at all about shell choice for reasons other than wanting license incompatibility problems and the GNU Project to take over the world, and you are currently using Bash, it is time to learn a different shell for interactive use.
While my current favorite is mksh, your preferences and needs may vary substantially, and I understand that and respect well-reasoned choices that differ from my own. You should use what suits you best. I just believe that, for any practical, technical reasons that might apply, Bash is never the right answer.

#### A Note About The Friendly Interactive Shell

I'm not entirely convinced that fish is not an elaborate prank. I have a difficult time imagining anyone who knows and cares much about the command line environment choosing fish, and I have a difficult time imagining anyone who does not care much about the command line environment having any particular motivation to make a conscious shell choice at all. This makes it, at most, seem like a weird trap for people who care a lot about the command line environment, based perhaps on some misguided urge to achieve “leet” status of some kind, but do not actually know much of anything about the command line interface in general and shells in particular.

I suppose fish might be the result of some kind of misguided, amateurish project gone awry and spinning out of control, perhaps a bit like PHP in that regard, but I'm not convinced fish is not simply a joke.

#### A Note About The Public Domain Korn Shell

I'm not familiar with the ins and outs of pdksh, and as such I do not really know what technical benefits might prompt one to choose it over other Korn shell implementations such as mksh. That decision is, at this time, left as an exercise for the reader.

### Scripting

There are basically three kinds of shell scripts you might write:

One is the kind that will never be used anywhere else, probably not even on another computer you own. This will almost certainly be a script that you only need a handful of times, and maybe only once, which is probably a known condition when you first write it.
The second is the kind that will, in some form, end up being used somewhere else, because the problem it solves is general enough that it will be useful in circumstances other than the immediate here-and-now use. In that case, you should to some degree optimize your scripting language choice for portability. The third is the kind that really should be a program, and not a shell script. For these purposes, when I say “script” I mean something that just automates the performance of tedious tasks, where a “shell script” is a script that automates tasks performed in the shell; when I say “program” I mean something that performs nontrivial operations involving data, algorithmic logic, and other tasks outside of typical shell usage, assuming a fairly low bar for “nontrivial”. As with interactive command shell use, a choice must be made when choosing what tool to use for shell scripting. A very common way to make this choice is to refuse to make a conscious choice, simply defaulting to using the syntax of the interactive command shell the user most often uses. I believe this is a mistake, a poor exercise of judgment — or, more accurately, a failure to exercise judgment at all. A few important criteria spring immediately to mind for what language should be chosen to write scripts:

* Immediacy
* Portability
* Suitability

#### Immediacy

Much of the attention of this essay has been, and will be, on choosing “the right tool for the job” in a technical sense. There is also something to be said for choosing “the right tool for right now”, which often suffers under the weight of nontechnical constraints. These are sometimes of overriding importance, so that you may find yourself struggling with some frustratingly bad mismatch between the capabilities of the tool at your disposal and the task you wish to accomplish. Ideally, this should never happen, but we do not live in an ideal world.

Sometimes, these problems are purely social, as in the case of having a middle-manager breathing down your neck, demanding that you use one of the corporation's bureaucratically specified Approved Programming Languages. Other times, they are more constrained by the laws of physics and the limitations of biological humanity, as in the case that there is some need to accomplish a task today rather than next week and you simply do not have time to learn a different language to use for accomplishing this task.

In either of these cases, you should almost certainly just use the language dictated by circumstances rather than technical suitability to the job at hand. In the first case, the reason you should probably do that is to avoid losing your job. In the second case, the reason you should probably do that is so you get the task done while it is still helpful to do so. I suppose there may be instances where the job or the task at hand is not more important than using “the right tool for the job”, but I certainly will not tell you this is a majority of the time, or even a statistically significant percentage of the time. You, personally, are probably in a much better position than me to decide what sacrifices to make in your personal circumstances.

This does not, of course, mean you have carte blanche to write off any alternatives as unimportant forever.
What it means is that, if the opportunity ever arises, you should find the time to learn a better tool for that kind of job in the future. This is a matter to handle with rational management of *Priorities* (that should eventually become a link to an essay I have not yet finished writing), not with blind adherence to entrenched biases.

#### Portability

Portability is, in a sense, the scripting (and, more broadly, programming) equivalent to what I mean by “ubiquity” for interactive command shells in the Interactivity section above. While I would argue that ubiquity in an interactive command shell is generally not a very important criterion for shell selection, except under very rare conditions, portability is much more important for scripting.

A popular rule of thumb is that if you are performing a tedious and repetitive task more than twice, you should automate it. D-Tools CEO Adam Stone reportedly phrased it like this:

“Anything that you do more than twice has to be automated. I would rather have [my employees] honestly going skiing than do any automated repetitive task.”

Of course, that is a rule of thumb, and not an absolute law. Take things on a case-by-case basis but, when in doubt, lean toward automation. Sometimes you should automate it even if you perform the task only once. You might learn a lesson more valuable than the extra time spent writing a script to automate it, and it might even be the case that you will encounter a tedious and repetitive task that takes longer to accomplish the tedious and repetitive way, even just once, than it would take to automate it.

As a corollary to that rule, I would suggest that if you then must use the script at least two times more than the initial planned uses (that is, if you write something to use four times, but you end up using it at least two more times) you should make it portable, if portability has any meaning whatsoever for that tool.
Obviously, a tool whose only purpose is to interact somehow with a .cshrc file's specific quirks as they differentiate it from other shell configuration files such as your .shrc file probably does not need to be written in a more-portable language than that of the C shell (though there are other reasons to avoid using the C shell language for scripting).

The problem with that corollary is that you should have made it portable before you even used it the first time, because otherwise there may be a lot of rewriting involved in making it portable. As a corollary to the corollary, then, it seems obvious to me that unless there is some overriding reason to do otherwise, you should always write your scripts with some reasonable optimization for portability. This essentially means two things:

1. If there is some way to do something in a way that is not specific to your specific computing environment and circumstances, so that people using other computing environments and working under other circumstances can use it without modification, and that way of doing things is not notably more troublesome than doing it the nonportable way, you should do it the portable way. It is often the case you should do it the portable way even if it is notably more troublesome to do it that way, in fact.
2. All else being roughly equivalent, you should use tools optimized for portability. That is, if there is no overriding reason to use a less portable tool, use the more portable tool. Such overriding reasons tend to involve a tool's suitability to the task at hand. If a nail will just pull out of the pieces of wood you wish to fasten together when the product is finished, you should probably use a screw instead, even though nails can be driven by hammers, rocks, and even other pieces of wood (conceivably), while screws pretty much require a screwdriver.

I leave satisfying condition one to you. Condition two, however, is quite relevant to this essay.
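
To make condition one concrete, here is a small sketch of what doing it the portable way can look like in practice. It is an illustration under stated assumptions rather than a rule from this essay: the helper names `have_cmd` and `pick_first` are hypothetical, and the editor names are only examples. It sticks to constructs that POSIX specifies for sh, such as `command -v`, `[ ... ]`, and `printf`, in place of common nonportable habits like `which`, `[[ ... ]]`, and `echo -e`.

```sh
#!/bin/sh
# Portable sketch: POSIX sh constructs only, no shell-specific extensions.

# have_cmd NAME: succeed if NAME can be run from this shell.
# Uses the POSIX `command -v` instead of the nonstandard `which`.
have_cmd() {
    command -v "$1" >/dev/null 2>&1
}

# pick_first NAME...: print the first NAME that is available,
# or fail if none of them are. (Hypothetical helper.)
pick_first() {
    for candidate in "$@"; do
        if have_cmd "$candidate"; then
            printf '%s\n' "$candidate"
            return 0
        fi
    done
    return 1
}

# Example use: honor $EDITOR if set, else take the first editor found.
editor=${EDITOR:-$(pick_first vi nano ed)} || editor=""
if [ -n "$editor" ]; then
    printf 'Would edit with: %s\n' "$editor"
else
    # Diagnostics go to STDERR, kept separate from normal output.
    printf 'No editor found\n' >&2
fi
```

None of this costs more effort than the nonportable equivalents, which is the situation condition one describes: when the portable way is no more troublesome, there is little reason not to take it.
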
Given the relative ubiquity of various shells, as described in the Interactivity section above, it should be quite obvious that the most portable shell scripts are those written for sh. Of course, Bash is probably the second most ubiquitous, but that is a much more distant second place than many Linux aficionados imagine.

Some GNU Project devotees may feel that Bash should be propagated by any means possible in service to the ideological cause of “Free Software” as defined by Richard Stallman and the Free Software Foundation, but I feel that choosing Bash for one's scripts specifically because it punishes people for using a different open source (or “Free Software”) shell as a way to manipulate them into using Bash is kind of hypocritical coming from someone who advocates for software “freedom”. This rejection of choosing a tool based on license advocacy desires (or other forms of advocacy) because of how it induces others to use it based on licensing is not universal, but applies well to choice of language for shell scripts because of the fairly universal conditions of Unix shell scripting and the fact that your sh implementation might even be a symlink to Bash.

As a result, there are really only two reasons that sound good to me, given what I have already said, for choosing any other shell language than sh for shell scripts. One is “immediacy”, as already covered. The other is suitability. In a moment, we will find that is not quite as strong an argument as you might think in many cases.

#### Suitability

The suitability of a tool to the task at hand, in most cases, trumps immediacy and portability concerns, as long as the suitability gap is sufficiently wide. If one tool is orders of magnitude better suited to a task than another, it probably makes sense to use the more-suitable tool rather than the less-suitable tool, even if the latter is far more portable and you know the tool better.
Learn to use a hammer when you want to pound nails, because a screwdriver just isn't going to do the job safely and well (or so I've heard; I have not been so stubbornly screwdriver oriented as to ignore a hammer when it is time to drive nails). I have, many times, encountered a Bash user claiming that the reason he writes his shell scripts for Bash, not caring whether they are sh-compatible, is the fact that Bash offers more (and better) programming features than sh. If we were living in a world of only two choices (as many people seem to think we do), that might be a good argument, but in the real world we have many choices, and assuming Bash is justified because it offers more programming features than sh is assuming a false dilemma. We have other choices and, in cases where programming features above and beyond what sh provides are necessary, many of those other choices are much better. In fact, some of them are not only technically better suited to tasks where something more than sh is needed, but also more portable. Unfortunately, in my experience, portability arguments are entirely lost on people who doggedly insist that they must use Bash for shell scripting, and they sometimes even get angry at the idea that they should ever consider the possibility that someone using a Unix-like system where Bash isn't available (or would have to be installed just for the use of a script the person wrote) should be accommodated in the design of a shell script that might be useful for many people. My take on the matter is quite simple, actually. 
Given that sh offers the basic capabilities Unix users have come to expect from their shells when automating shell tasks (notably including conveniently separate redirection capabilities for STDERR and STDOUT, which csh syntax shells lack), the most portable shell available is also sufficiently well suited to the task of simple shell automation, and as such sh should be the shell scripting language of choice *regardless of your preferred interactive command shell*.

In any cases where more advanced programming capabilities than sh provides are needed, you are now talking about writing *programs*, and not mere shell scripts. There are even shell scripts you could write in sh that require advanced enough programming language constructs that they should be written in an actual programming language (that is, a language designed first and foremost for programming, rather than as an interactive command shell with programming facilities bolted onto it for the sake of scripting the shell environment) even though they still may not quite rise to the level of being called by a more momentous term than “script”.

In short, if sh is not a suitable tool for the job at hand, you simply should not be using a shell language to write the script or program you wish to write anyway. The four most obvious languages that come to mind as better choices, once sh ceases to be suitable to the task at hand, are all more portable than *any* shell, *including* sh, in fact (and are listed here in alphabetical order):

* C

Obviously, the choice of which of these languages (or any other non-shell languages you might prefer) to choose is a matter for language warriors and, apart from the narrow realm of shell preference, not really relevant to the purposes of this essay.

Another option that might make sense is a much more C-like scripting language, if such a thing were more widespread, but somehow it simply has not arisen in broad distribution. Perl is closer in many ways than the C shell, and has the advantage of being designed as a programming language rather than an interactive command shell with some programming facilities bolted on for scripting purposes (to say nothing of the other flaws of the csh family of shells for scripting purposes), and the various “Interpreted C” implementations are mutually incompatible, not supported by a strong community, and unpopular in the extreme.

### Design Optimization

This is a fairly distinct matter, mentioned in the introduction to this essay, that deserves some brief explanation of its own.

The design of an interactive command shell is best accomplished with the purpose of making it a good interactive command shell held in mind as the primary aim of the effort. The design of a programming language should generally be accomplished with the purpose of making it a good programming language held in mind as the primary aim of the effort. Each implies some approaches to doing things that do not apply to the other.

For instance, programming languages tend to benefit from very unified principles of syntactic and semantic philosophy. This also tends to yield a certain amount of simplicity in the basic structure of the language, making it easier to learn quickly and use consistently. More sophisticated functionality might be added by way of libraries, for instance, so that those capabilities exist but do not clutter up the core language.
Unfortunately, such concerns tend to make the syntax a bit annoying to use at the command line in an interactive manner, as in the case of trying to use a Scheme REPL as an interactive command shell, because structural cues that are helpful to the writing of software (e.g. Scheme's parentheses) can be very annoying to use after a while.

By contrast, interactive command shells tend to benefit from a much more practical, fast-and-loose approach to design philosophy, with less clear divisions between different types of tokens. An example of this in action is the way that any program in your execution path can be invoked in a manner syntactically identical to the way shell builtin commands are invoked. In essence, the more tools you put in your execution path, the bigger the language.

Such concerns for language design optimization conflict with each other. These, and many more examples, show how the design concerns of one family of languages (programming languages or shell languages) conflict with those of the other. Any attempt to make one of them more like the other will tend to undermine its suitability to its original task. This is, in fact, a trap into which Bash has fallen, with various programming facilities built into the language never quite living up to the promise of how nice they are to have in a programming language, and interfering with the smooth design of the interactive command shell language itself.

### Final Thoughts

I have arguments to the effect that Bash is kind of like the duck-billed platypus of the shell world. It tries to be and do too much, and ultimately fails at all of it, ending up as just another ugly, ungainly weirdo that lumbered out of the GNU Project. The major difference between Bash and the platypus in this regard is that the platypus, despite all appearances to the contrary, actually has some current ecological niche to which it is well-suited.
Bash is a dinosaur that should long since have gone extinct, but its popularity is maintained by the propagandizing efforts of the GNU project and the same kind of fear of change that keeps many MS Windows users from trying out open source Unix-like operating systems in the first place.

I know there are many people who will surely object to the lines of reasoning employed within this essay (people deeply attached to their Bash usage, I expect). Some people may have rational objections to some of my lines of argument as they are currently composed, or suggestions for how to make this better. I encourage such people to write to me (Apotheon) via the contact page at:

http://blogstrapping.com/contact.rhtml

#### Acknowledgements

When someone going by the name of OldCoder cut such a discussion short because he had to go to work, he asked me to collect my thoughts on the matter in a form that could be posted online for him to read. While this essay is probably much more of a grand undertaking than he envisioned, it is still ultimately inspired by him, and for that I thank him.

I would also like to thank the missus for reading this thing two or three times at various stages of completeness. Her help in refining what I write is always appreciated, even in cases where (like this) there is simply some weakness in the way something is written and no obvious way to fix it. Any such weaknesses in this essay are completely my fault, and not hers, especially considering she is not much of a shell connoisseur in the first place.

#### Really Final Thoughts

Ultimately, I hope this helps some people realize what I did when I changed the working title of this essay from *Bash Considered Harmful* to *Bash Considered Pointless*: worse than merely causing problems, Bash actually has no continuing excuse to exist apart from inertia. I believe quite strongly that you will wean yourself off Bash, if you know what is good for you, and if you are actually using it regularly in the first place.
If you are not using Bash regularly, perhaps this essay will help you shore up your belief that you have made the right decision.
